Google API Key Vulnerability Exposes Private Data Through Gemini

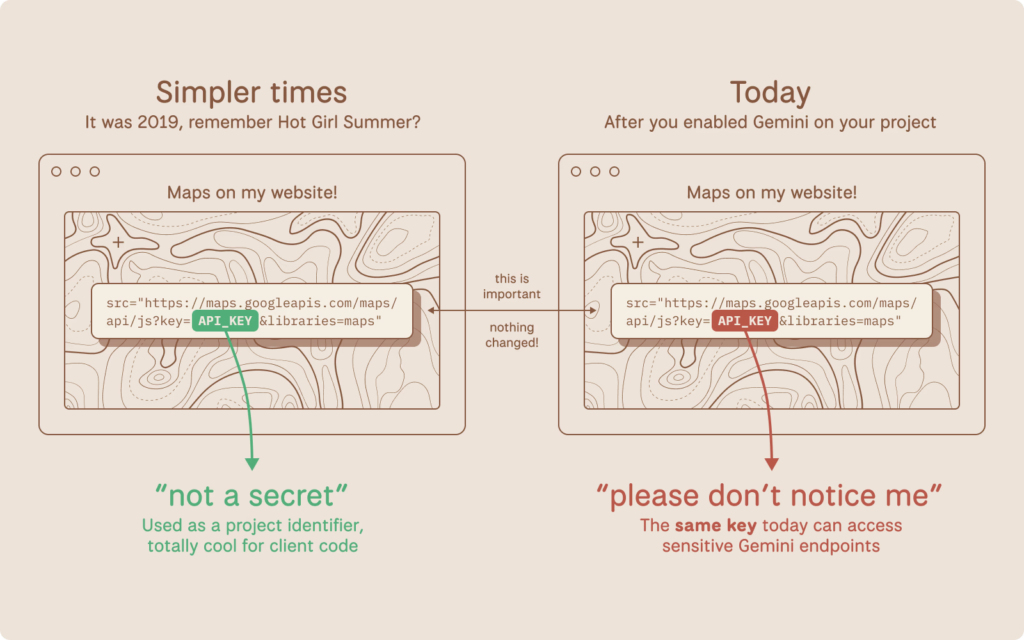

Google’s long-standing advice to developers that API keys for services like Maps or Firebase are safe to embed in public websites has backfired dramatically with the rise of Gemini.

A new privilege escalation flaw turns these “non-secret” keys into gateways for attackers to raid private files, rack up massive bills, and disrupt services. Scanning millions of sites uncovered nearly 3,000 exposed keys ripe for abuse, including some from Google’s own pages.

Google API Breakdown

For over a decade, Google positioned API keys (starting with “AIza…”) as simple project identifiers for billing and usage tracking, not authentication secrets.

Firebase’s security guide explicitly calls them “not secrets,” and the Maps docs tell developers to paste them straight into HTML. Restrictions such as HTTP referrer checks add a layer, but they rely on the Referer header, which the caller supplies and can trivially spoof.
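Referrer restrictions are weak because the Referer header is set by whoever sends the request. The minimal Python sketch below uses only the standard library to build, but never send, a request that claims to come from an allowed site. `https://www.example.com/` is a hypothetical stand-in for whatever referrer a key is restricted to, and `YOUR_KEY` is a placeholder.

```python
import urllib.parse
import urllib.request


def build_request(endpoint: str, api_key: str, claimed_referrer: str) -> urllib.request.Request:
    """Build a GET request whose Referer header is whatever the caller chooses.

    Nothing verifies this value on the client side, so a referrer
    restriction only deters casual misuse, not a deliberate caller.
    """
    url = endpoint + "?" + urllib.parse.urlencode({"key": api_key})
    return urllib.request.Request(url, headers={"Referer": claimed_referrer})


if __name__ == "__main__":
    req = build_request(
        "https://generativelanguage.googleapis.com/v1beta/models",
        "YOUR_KEY",  # placeholder, not a real key
        "https://www.example.com/",  # hypothetical allowed referrer
    )
    # The request is only constructed and inspected here; it is never sent.
    print(req.full_url)
    print(req.get_header("Referer"))
```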

Enter Gemini, Google’s generative AI, served through the Generative Language API. Enabling that API on a Google Cloud project quietly grants the project’s existing unrestricted API keys access to sensitive endpoints.

No alerts, no opt-in, just instant escalation. A key baked into your site’s JavaScript for a map widget three years ago? Now it unlocks Gemini files, cached data, and AI inference, billing straight to your account.
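The escalation is easiest to see as set arithmetic. The toy Python model below is a deliberate simplification, not Google’s actual authorization logic: it assumes a key with no API restriction can call every API enabled on its project, while a restricted key can call only the enabled APIs on its allow-list. `maps-backend.googleapis.com` is assumed here as the Maps JavaScript API service name, purely for illustration.

```python
from typing import FrozenSet, Optional

GEMINI = "generativelanguage.googleapis.com"
MAPS = "maps-backend.googleapis.com"  # assumed service name, illustration only


def effective_scope(enabled_apis: FrozenSet[str],
                    allowed_apis: Optional[FrozenSet[str]]) -> FrozenSet[str]:
    """Return the APIs a key can reach in this toy model.

    allowed_apis=None models an unrestricted key: it inherits every API
    enabled on the project, including ones enabled after it was issued.
    """
    if allowed_apis is None:
        return enabled_apis
    return enabled_apis & allowed_apis


if __name__ == "__main__":
    project = frozenset({MAPS})
    print(GEMINI in effective_scope(project, None))  # False
    project = project | {GEMINI}  # someone enables Gemini on the project
    print(GEMINI in effective_scope(project, None))  # True: same key, new power
    print(GEMINI in effective_scope(project, frozenset({MAPS})))  # False
```

The unrestricted key never changes; only the project around it does, which is what makes the escalation retroactive.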

This isn’t user error; it’s retroactive power creep. Keys designed for public use can morph into credentials without warning, violating secure-by-default principles (think CWE-1188 for insecure defaults and CWE-269 for improper privilege management).

Attack in Three Clicks

- View page source → copy the `AIzaSy...` key from the Maps script.

- Test: `curl "https://generativelanguage.googleapis.com/v1beta/models?key=YOUR_KEY"`. If it returns 200 OK with a list of models (like gemini-2.0-flash), jackpot.

- Data theft: Hit `/v1beta/files` or `/v1beta/cachedContents` for uploaded docs, datasets, or conversation histories.

- Bill shock: Spam Gemini calls; costs scale fast with context size and can reach thousands of dollars per key per day.

- Denial of service: Burn quotas, crippling your legitimate AI apps.

No server access needed. Just public scraping.
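For auditing keys you own or are authorized to test, the Test step can be scripted. This Python sketch, standard library only, requests the same `/v1beta/models` URL as the curl command and reports whether the key was accepted. It assumes a successful response is JSON with a `models` array whose entries carry a `name` field. The `fetch` parameter is injectable so the logic can be exercised without network access.

```python
import json
import sys
import urllib.error
import urllib.parse
import urllib.request
from typing import Callable, List, Tuple

MODELS_URL = "https://generativelanguage.googleapis.com/v1beta/models"

Fetch = Callable[[str], Tuple[int, str]]


def http_get(url: str) -> Tuple[int, str]:
    """GET a URL and return (status, body), treating HTTP errors as results."""
    try:
        with urllib.request.urlopen(url, timeout=10) as resp:
            return resp.status, resp.read().decode("utf-8")
    except urllib.error.HTTPError as err:
        # 4xx responses are the expected outcome for keys without Gemini access.
        return err.code, err.read().decode("utf-8", errors="replace")


def probe_gemini_access(api_key: str, fetch: Fetch = http_get) -> Tuple[bool, List[str]]:
    """Return (has_access, model_names) for a key you are allowed to test."""
    url = MODELS_URL + "?" + urllib.parse.urlencode({"key": api_key})
    status, body = fetch(url)
    if status != 200:
        return False, []
    # Assumed response shape: {"models": [{"name": "models/..."}, ...]}
    models = json.loads(body).get("models", [])
    return True, [m.get("name", "") for m in models]


if __name__ == "__main__" and len(sys.argv) > 1:
    ok, names = probe_gemini_access(sys.argv[1])
    print("EXPOSED to Gemini" if ok else "no Gemini access", names[:5])
```

A key that comes back as exposed belongs in the Rotate & Restrict step, not in a production page.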

Scale of the Problem: 2,863 Exposed Keys

Using the November 2025 Common Crawl dataset (700 TiB of web snapshots), researchers found 2,863 live keys vulnerable to this escalation. These are not fringe sites: victims span banks, security firms, recruiters, and Google itself.

| Category | Example Victims | Risk Level | Keys Found |
|---|---|---|---|
| Financial Institutions | Major banks, fintech apps | High (PII exposure) | 847 |
| Tech/Security Cos | Cybersecurity vendors | Critical (irony) | 512 |
| Enterprises | Global recruiters, e-commerce | High (billing abuse) | 1,204 |
| Google Properties | Public product pages (pre-2023) | Confirmed PoC | 300+ |

Google’s own keys, embedded in non-AI product pages archived as early as February 2023, authenticated successfully to Gemini’s /models endpoint, proving that even the pros are affected.

Disclosure and Google’s Response

Reported through Google’s Vulnerability Disclosure Program (VDP) on November 21, 2025, the issue was first dismissed as “intended behavior.” Supplying examples of exposed Google-hosted keys flipped the script: by January 13, 2026, it was reclassified as a Tier 1 “Single Service Privilege Escalation, READ” bug. Google received the full key list, rolled out leaked-key blocking for Gemini, and plans:

- Scoped new keys (Gemini only by default in AI Studio).

- Proactive alerts for leaks.

- Root fix underway (as of Feb 2026).

Broader AI Risks

This isn’t isolated. Stripe’s publishable keys remained public-safe until bolt-on features expanded their scope. OpenAI faced similar leaks before key-rotation mandates. AI’s “enable and forget” culture amplifies legacy credential traps, as public identifiers quietly gain god-mode access without fanfare.

Google API Vulnerability: Actionable Steps

- Hunt Gemini: In GCP Console > APIs & Services > Enabled APIs, search “Generative Language API” across all projects.

- Key Inventory: Credentials tab → Flag unrestricted keys or those allowing Generative Language API.

- Exposure Check: grep repos and sites for `AIzaSy`. Test live status with TruffleHog: `trufflehog filesystem /your/code --only-verified`. Prioritize old keys (pre-Gemini era).

- Rotate & Restrict: Nuke exposed keys. Set API-specific restrictions; use service accounts for sensitive work.

- Monitor: Enable billing alerts; scan CI/CD with secrets tools.
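The grep in the Exposure Check step can also be done with a short Python scanner. It searches for the widely used Google API key pattern, `AIza` followed by 35 characters from `[0-9A-Za-z_-]`. That pattern is an assumption borrowed from common secret-scanning rules rather than an official format guarantee, and unlike TruffleHog’s `--only-verified` mode this sketch does not check whether a match is live.

```python
import re
import sys
from pathlib import Path
from typing import Iterator, Tuple

# Common secret-scanner pattern for Google API keys (39 characters total).
GOOGLE_KEY_RE = re.compile(r"AIza[0-9A-Za-z_\-]{35}")


def scan_tree(root: str) -> Iterator[Tuple[str, int, str]]:
    """Yield (path, line_number, key) for every candidate key under root."""
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file: skip it rather than abort the scan
        for lineno, line in enumerate(text.splitlines(), start=1):
            for match in GOOGLE_KEY_RE.finditer(line):
                yield str(path), lineno, match.group(0)


if __name__ == "__main__" and len(sys.argv) > 1:
    for path, lineno, key in scan_tree(sys.argv[1]):
        # Print only a prefix so the report itself does not leak full keys.
        print(f"{path}:{lineno}: {key[:10]}...")
```

Matches from this scan are candidates to feed into a live check such as TruffleHog before deciding which keys to rotate first.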

Separate publishable (client-side) keys from secret keys religiously. Tools like GitGuardian or custom crawlers help.
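The Key Inventory step can be automated once key metadata is exported. This sketch assumes each key is a dict shaped like the Google Cloud API Keys API `Key` resource, where `restrictions.apiTargets` lists the services a key may call. It flags keys with no API targets (no API restriction, so they inherit anything enabled later) and keys whose targets include the Generative Language API. How you export the list is left open, and the sample records are hypothetical.

```python
from typing import Any, Dict, List

GEMINI_SERVICE = "generativelanguage.googleapis.com"


def risky_keys(keys: List[Dict[str, Any]]) -> List[str]:
    """Return display names of keys that can (or could later) reach Gemini.

    Assumed record shape, modeled on the API Keys API Key resource:
    {"displayName": str, "restrictions": {"apiTargets": [{"service": str}]}}
    """
    flagged = []
    for key in keys:
        targets = key.get("restrictions", {}).get("apiTargets", [])
        services = {t.get("service") for t in targets}
        # No API targets means no API restriction: the key inherits any API
        # enabled on the project later, Gemini included.
        if not targets or GEMINI_SERVICE in services:
            flagged.append(key.get("displayName", "<unnamed>"))
    return flagged


if __name__ == "__main__":
    sample = [  # hypothetical records for illustration
        {"displayName": "maps-widget", "restrictions": {"browserKeyRestrictions": {}}},
        {"displayName": "maps-locked",
         "restrictions": {"apiTargets": [{"service": "maps-backend.googleapis.com"}]}},
        {"displayName": "ai-studio",
         "restrictions": {"apiTargets": [{"service": GEMINI_SERVICE}]}},
    ]
    print(risky_keys(sample))  # ['maps-widget', 'ai-studio']
```

A referrer-only restriction, as on `maps-widget`, does not clear a key: without API targets it still reaches every enabled API.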

This flaw underscores AI’s inheritance woes: yesterday’s safe tokens fuel tomorrow’s breaches. Google’s moving, but devs must act first; your Maps embed could be tomorrow’s headline.